
...

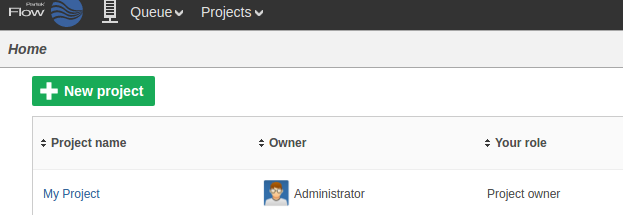

The new project will appear on the Flow homepage:

Upload a group of samples

...

This operation will generate a data node on the Analyses tab for the imported samples:

Assign sample attributes

...

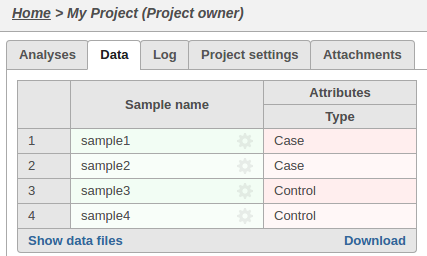

The sample attributes can be viewed and managed on the Data tab:

Run a pipeline

...

    wget -q -O - "http://localhost:8080/flow/api/v1/pipelines/list$AUTHDETAILS" | python -m json.tool | gvim -
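The same request can be made from Python instead of wget. The following is a minimal sketch, assuming `AUTHDETAILS` holds the complete authentication query string (starting with `?`), as the wget command implies. The helper names `pipelines_list_url`, `fetch_json` and `pretty` are illustrative and are not part of Flow.

```python
import json
from urllib.request import urlopen

# Server and API prefix taken from the wget example; adjust for your install.
BASE_URL = "http://localhost:8080/flow/api/v1"


def pipelines_list_url(auth_details, base_url=BASE_URL):
    """Return the pipelines/list URL with the auth query string appended.

    auth_details is treated as an opaque string (assumption: it already
    starts with '?', mirroring $AUTHDETAILS in the shell command).
    """
    return f"{base_url}/pipelines/list{auth_details}"


def fetch_json(url):
    """GET a URL and decode the JSON body of the response."""
    with urlopen(url) as response:
        return json.loads(response.read().decode("utf-8"))


def pretty(data):
    """Format decoded JSON the way `python -m json.tool` does (4-space indent)."""
    return json.dumps(data, indent=4)
```

With a server running, `print(pretty(fetch_json(pipelines_list_url(os.environ["AUTHDETAILS"]))))` (after `import os`) should print the same listing the wget command sends to gvim.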

Many pipelines also require that library files are specified.

You can get the list of required inputs for the pipeline from the API:

http://localhost:8080/flow/api/v1/pipelines/inputs?project_id=0&pipeline=AlignAndQuantify
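If the project ID or pipeline name comes from a script, the query string is safer to build than to type by hand. A minimal sketch, assuming only the two parameters shown in the URL above (`project_id` and `pipeline`); the function name `pipeline_inputs_url` is hypothetical, and any authentication parameters your server needs would have to be added to the dictionary.

```python
from urllib.parse import urlencode

# Server and API prefix taken from the example URL; adjust for your install.
BASE_URL = "http://localhost:8080/flow/api/v1"


def pipeline_inputs_url(project_id, pipeline, base_url=BASE_URL):
    """Build the pipelines/inputs URL, percent-encoding both values."""
    query = urlencode({"project_id": project_id, "pipeline": pipeline})
    return f"{base_url}/pipelines/inputs?{query}"
```

For example, `pipeline_inputs_url(0, "AlignAndQuantify")` produces a URL of the same shape as the one above.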

This particular pipeline requires a bowtie index and an annotation model:

The request to launch the pipeline needs to specify one resource ID for each input.

These IDs can be found using the API:

Get the IDs for the library files that match the required inputs

    wget -q -O - "http://localhost:8080/flow/api/v1/library_files/list${AUTHDETAILS}&assembly=hg19" | python -m json.tool | gvim -
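The wget command appends `&assembly=hg19` directly to `AUTHDETAILS`, which only works when `AUTHDETAILS` already opens the query string with `?`. The sketch below makes that assumption explicit and falls back to `?` when no auth string is supplied. The function name `library_files_url` is illustrative; `assembly` is the only filter parameter taken from the original command.

```python
from urllib.parse import urlencode

# Server and API prefix taken from the wget example; adjust for your install.
BASE_URL = "http://localhost:8080/flow/api/v1"


def library_files_url(auth_details, assembly, base_url=BASE_URL):
    """Build the library_files/list URL filtered by genome assembly.

    auth_details is an opaque string mirroring $AUTHDETAILS. If it already
    starts the query string with '?', the filter is joined with '&';
    otherwise the filter starts the query string itself.
    """
    separator = "&" if auth_details.startswith("?") else "?"
    query = urlencode({"assembly": assembly})
    return f"{base_url}/library_files/list{auth_details}{separator}{query}"
```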

The pipeline can be launched in any project using RunPipeline.py:

    python RunPipeline.py -v --server http://localhost:8080 --user admin --password $FLOW_TOKEN --project_id 0 --pipeline AlignAndQuantify --inputs 103,102
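Because the launch request needs one resource ID per required input, it can help to check the count before calling the script. The following sketch builds the argument list for the command above and raises an error on a mismatch. The function name `run_pipeline_argv` is hypothetical, the flags are exactly those shown in the command, and it is an assumption that the IDs must be given in the same order as the required inputs.

```python
import sys


def run_pipeline_argv(server, user, token, project_id, pipeline,
                      required_inputs, resource_ids):
    """Return the argv list for RunPipeline.py.

    required_inputs: names reported by the pipelines/inputs request.
    resource_ids: one library file ID per required input (assumed to be
    in the same order as required_inputs).
    """
    if len(resource_ids) != len(required_inputs):
        raise ValueError(
            f"{pipeline} needs {len(required_inputs)} input(s) "
            f"but {len(resource_ids)} resource ID(s) were given"
        )
    return [
        sys.executable, "RunPipeline.py", "-v",
        "--server", server,
        "--user", user,
        "--password", token,
        "--project_id", str(project_id),
        "--pipeline", pipeline,
        # RunPipeline.py takes the IDs as one comma-separated value.
        "--inputs", ",".join(str(i) for i in resource_ids),
    ]
```

The resulting list can be passed to `subprocess.run(argv, check=True)` from the directory that contains RunPipeline.py.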

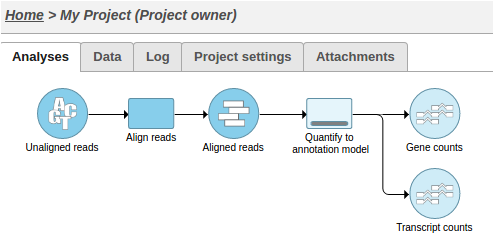

This action will cause two tasks to start running:

Alternatively, UploadSamples.py can create the project, upload the samples and launch the pipeline in one step:

    python UploadSamples.py -v --server http://localhost:8080 --user admin --password $FLOW_TOKEN --files ~/sampleA.fastq.gz ~/sampleB.fastq.gz --project NewProject --pipeline AlignAndQuantify --inputs 103,102

Add a collaborator to a project

...